Date: 01/10-01/11/2022

3D Scans Showcase:

Please note that these models may take some time to load, depending on the speed of your network connection.

Scaniverse

Polycam

3D Scanner App

If you choose to vote, your data will be used for research purposes in Yuan Gao’s PhD project at the University of Leeds. The information sheet and consent form are available. Please read both documents before contributing data. By clicking the “Vote” button, you indicate your agreement to the consent form. You can withdraw at any time before submitting your vote (that is, before clicking the “Vote” button). Because the data collection is anonymous, your data cannot be withdrawn from the data pool after you click the “Vote” button. Please vote carefully. Thank you.

Revopoint POP3

I failed to scan machines with this scanner. I began to question whether operating the scanner was really as easy and efficient as the product description and the YouTube product test videos suggested, so I switched to one of the product’s recommended targets: people. To see how well the product captured depth, material and detail, I asked my subjects to wear dark clothing or metal jewellery, or to sit on a chair with thin legs. Even though I strictly adhered to the scanning distance requirements of the scanner’s accompanying software and referred to several instructional videos about the scanner, the results were still unsatisfactory after several attempts (averaging one hour per subject).

Reflective Diary

The Calderdale Industrial Museum (CIM) is a volunteer-run institution often constrained by limited time, funding, and technical expertise. This project is a technical test of both the capabilities and limitations of mobile 3D scanning. In this practice, my aim was to investigate the capabilities of consumer-grade technologies in creating visualised digital replicas of industrial machines, and then reflect on how they might reshape our understanding of labour and curatorship within the context of digital heritage.

I continued working with the iPhone 13 Pro for the same reason given in Practice Log 1. I also selected three mainstream 3D modelling apps (Scaniverse, Polycam, and 3D Scanner App). I chose these apps not only for their accessibility and compatibility with phones, but also because they have been referenced in recent academic studies (Smrčková et al., 2024; Benítez Hernández & López Consuegra, 2024; Cascarano et al., 2025). However, those studies often focused on domestic objects, architectural spaces, or abstract forms rather than industrial heritage, even though it is a very important part of British and world history. This practice was therefore an attempt to re-contextualise these tools within a real museum setting and to test how well they perform under conditions of complexity, clutter, and curatorial constraint.

Apart from the tools and technologies above, I also tested the Revopoint POP3 handheld scanner, which uses the Revo Scan app on a mobile phone to build a model after scanning. It is also a low-cost handheld device marketed to non-professional/commercial users. I did not initially consider it because, when I started this research, I had not paid attention to the handheld scanners mentioned in the 3D modelling literature on museums, and I could not imagine that such a technology could be low-priced. Quite by chance, I met the artist Dave Lynch from Immersive Networks, who mentioned that he had purchased a Revopoint POP3 for one of his own projects. I then began to research handheld scanners. Compared to commercial-grade handheld scanners, this scanner is presented on its official website as capable of scanning small to medium-sized objects (https://www.revopoint3d.com/pages/portable-3d-scanner-pop3), and YouTubers have been showcasing scans and the process of scanning industrial parts and objects. At first, I thought that a car, although not similar in volume to many industrial exhibits in the museum, had finer, smaller parts, so that it could be seen as a combination of the successful cases on the official website and in the YouTube videos. Furthermore, the scanner is considerably cheaper than an iPhone 13 Pro. Based on the advertised capabilities and price, I added this device to my list of test subjects.

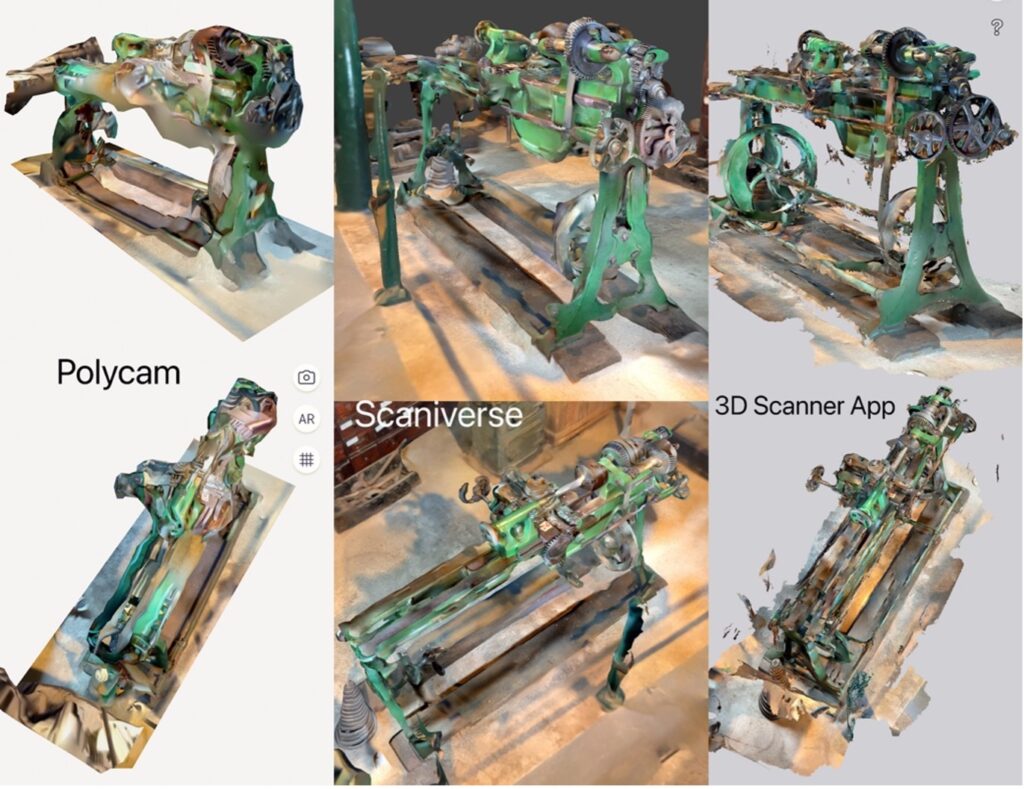

The visual outcomes were mixed (see image 1). Although Polycam is popular online and has been evaluated positively in academic research (e.g. Vacca, 2023), its performance was disappointing in my practice, whereas the lesser-known 3D Scanner App produced surprisingly consistent results. The mixed results were mainly because LiDAR-based scanning typically struggles with dark, reflective, or transparent surfaces, even under ideal conditions (Balado et al., 2024; Umezu et al., 2023). Meanwhile, although I followed the product’s official guidance, the Revopoint scanner failed to produce usable models because of its narrow optimal scanning distance and its sensitivity to surface occlusion. This raised questions for me about the real effectiveness of consumer-grade 3D scanners for reproducing complex objects. After data is input into these software applications, how do they analyse it and piece it together? Is there a way to know which software might provide better scanning results without opening the “black box” that involves commercial secrets?

Image 1. Scanning results from the three apps.

Because a LiDAR scanner collects data as a point cloud, the density of the point cloud determines the clarity of the model, and the distances between the points define the model’s surfaces (faces). The point density of the iPhone 13 Pro’s LiDAR scanner has not been publicly disclosed and can only be inferred from review videos. Among them, Rami Tamimi’s test (https://youtu.be/R9lGi4wMdQQ?si=tovN3kv6z39Uu6jY) of the iPhone 13 Pro’s LiDAR accuracy showed it to be good compared with a professional surveying total station. However, since the scanner is a constant in this study, its accuracy cannot explain the disparity between the modelling results of the three apps and will therefore not be discussed in depth.

To understand the potential reasons for the differences in these model outcomes, one needs to understand how 3D models are constructed. In short, during the 3D scanning process, the scanner measures the distance to points on the surface of the object, either by triangulation (a geometric principle used by structured-light scanners) or by time-of-flight measurement (as in LiDAR sensors). It captures a multitude of such points, forming a point cloud. These points are then connected into a network of triangles to create a mesh, in which each triangle represents a small, flat area of the scanned object’s surface. This means that, in general, the more triangles the mesh uses (higher resolution), the more detailed and accurate the representation of the object’s surface will be.
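The link between triangle count and surface detail described above can be illustrated with a minimal Python sketch. It is not how any of the three apps builds its meshes (that remains inside the commercial black box); it simply samples the wall of an idealised pipe as a point cloud, connects neighbouring points into triangles, and reports how far the flat facets sit from the true curved surface. The function names, the 5 cm radius, and the sampling densities are all assumptions chosen for illustration.

```python
import math

def cylinder_mesh(radius, height, segments, rings):
    """Sample a cylinder wall as a point cloud and connect neighbours into triangles."""
    points = []
    for j in range(rings + 1):
        z = height * j / rings
        for i in range(segments):
            angle = 2 * math.pi * i / segments
            points.append((radius * math.cos(angle), radius * math.sin(angle), z))
    triangles = []
    for j in range(rings):
        for i in range(segments):
            a = j * segments + i                   # point on the lower ring
            b = j * segments + (i + 1) % segments  # its neighbour on the same ring
            c = a + segments                       # point directly above a
            d = b + segments                       # point directly above b
            triangles.append((a, b, c))
            triangles.append((b, d, c))
    return points, triangles

def max_facet_deviation(radius, segments):
    """Largest gap between the true curved wall and the flat facets (the sagitta)."""
    return radius * (1 - math.cos(math.pi / segments))

# Hypothetical pipe: 5 cm radius, 1 m long, sampled at three densities.
for segments in (6, 24, 96):
    points, triangles = cylinder_mesh(radius=0.05, height=1.0, segments=segments, rings=10)
    gap_mm = max_facet_deviation(0.05, segments) * 1000
    print(f"{segments:3d} points per ring: {len(points):5d} points, "
          f"{len(triangles):5d} triangles, max gap {gap_mm:.3f} mm")
```

With only six points around the pipe, the mesh is visibly hexagonal and the facets sit several millimetres inside the real surface; as the sampling density rises, the triangle count grows and the gap shrinks towards zero. This is one plausible reason why curved industrial parts are the first details to disappear in a low-density mesh.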

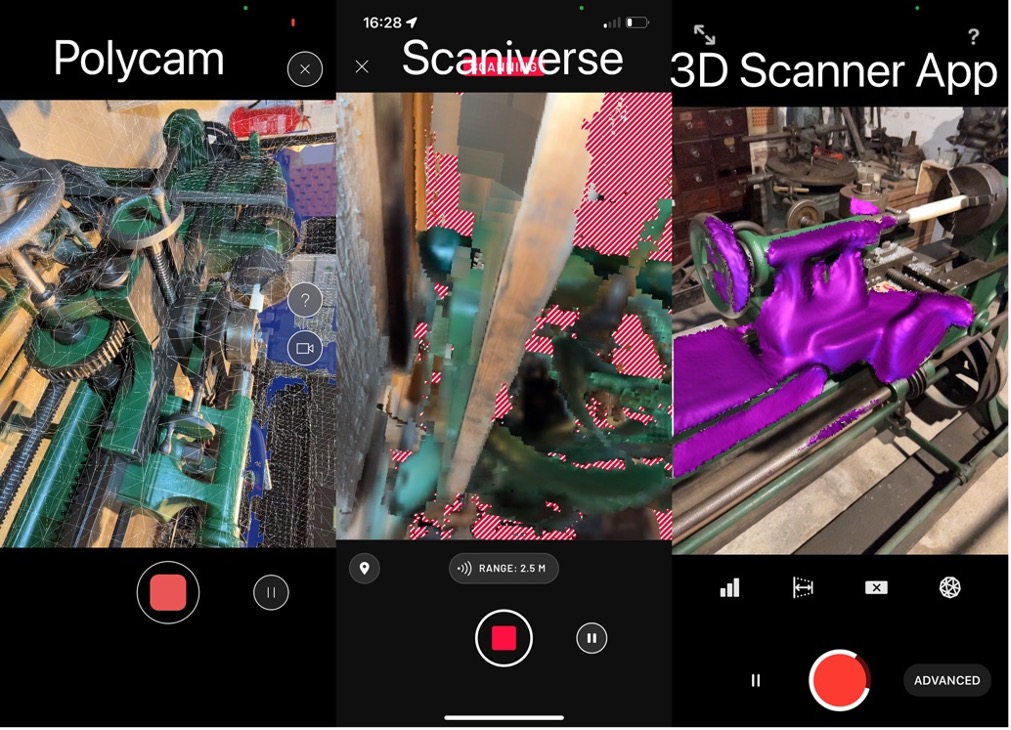

After observing the visual feedback of the scanning progress (see image 2) presented to the user by the three apps’ front-ends and the final scan results, I propose a hypothesis: the visual feedback of the scanning progress is directly related to the scan results and reflects the precision of the scan results.

During use, the interface acts as a guide that helps the user identify which parts of the object have or have not been scanned. Each application uses a different display method to show which parts of the object’s data have been acquired.

Image 2. Scanning in-process interfaces.

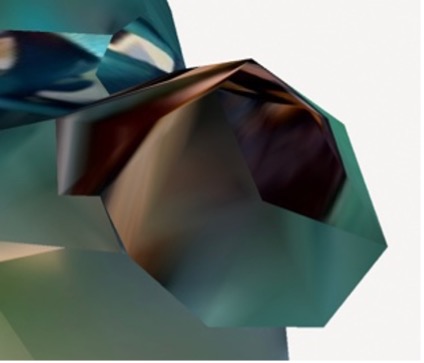

Image 3. The details of the scanned models from Polycam (left), Scaniverse (centre), and 3D Scanner App (right).

Zooming in to view the details of each model (see image 3 above) shows that Polycam’s model is constructed from low-density triangles, Scaniverse’s is constructed from points, and 3D Scanner App’s is constructed from high-density triangles and directly displays the device’s “understanding” of the scanned object in the form of colour. This is consistent with the visual effects in the scanning in-process interface and also aligns with the basic principles of 3D model construction mentioned above. In terms of density, Polycam’s is the lowest, and because its models are built from large triangles, curved shapes disappear across much of the model. Polycam’s result was therefore the worst of the three in this test. The higher density of Scaniverse’s point cloud enhanced the model’s detail, but the need to use every point to form the triangular faces, while making the model look very complete, also caused parts that should be straight lines to bend. In contrast, the 3D Scanner App, with its higher density and its lack of emphasis on a mandatory connection between every point, rendered curved lines relatively well. However, compared to the other two apps, its scans left many small, fragmented pieces unconnected to the main body of the object.
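The visual comparison above could be complemented by two simple counts on an exported model: how many triangles it contains, and how many separate pieces it falls into. The following Python sketch is one possible way to do this, assuming the app can export the model in Wavefront OBJ format; `parse_obj`, `count_components`, and the sample data are hypothetical and were not part of this practice.

```python
def parse_obj(text):
    """Read vertex and face records from Wavefront OBJ text (positions only)."""
    vertices, faces = [], []
    for line in text.splitlines():
        parts = line.split()
        if not parts:
            continue
        if parts[0] == "v":
            vertices.append(tuple(float(x) for x in parts[1:4]))
        elif parts[0] == "f":
            # Face entries look like "7", "7/2" or "7/2/5"; OBJ indices start at 1.
            # Negative (relative) indices are not handled in this sketch.
            faces.append([int(p.split("/")[0]) - 1 for p in parts[1:]])
    return vertices, faces

def count_components(vertex_count, faces):
    """Count groups of faces that share vertices, using union-find."""
    parent = list(range(vertex_count))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving keeps the trees shallow
            i = parent[i]
        return i

    for face in faces:
        root = find(face[0])
        for index in face[1:]:
            parent[find(index)] = root
    return len({find(face[0]) for face in faces})

# Made-up sample: two triangles forming a square, plus one detached fragment.
sample = """
v 0 0 0
v 1 0 0
v 0 1 0
v 1 1 0
v 5 5 5
v 6 5 5
v 5 6 5
f 1 2 3
f 2 4 3
f 5 6 7
"""
vertices, faces = parse_obj(sample)
print(len(faces), "triangles in", count_components(len(vertices), faces), "separate pieces")
# prints: 3 triangles in 2 separate pieces
```

A high piece count would correspond to the small, unconnected fragments observed in the 3D Scanner App’s results. One caveat is that some exports store a separate copy of each vertex for every face, in which case nearby vertices would need to be merged before the count is meaningful.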

There is therefore a direct link between the scanning in-process interface of these apps and their scan results. It can also be seen that Polycam is better at scanning environments, while the other two are more suited to scanning small to medium-sized objects, with the 3D Scanner App representing curves better. This might break the promotional hype around, and the monopoly of, the “best” 3D scanning app, encouraging users to try different products and enabling them to judge for themselves, via the controllable user interface, which app suits which kind of 3D model. However, in 2022, none of these three apps could scan thin or narrow objects such as pipes or conveyor belts. Since the user can control the 3D scanning process only during the scan and after receiving the result, and has no access to the classified information inside the commercial black box, comparing the scanning in-process interface with the details of the finished product can help in understanding each app’s characteristics. In the current era of rapid technological development, these problems might soon be overcome. However, reflecting on the limited capacity of small and medium-sized museums to focus on and test digital technologies, I believe an effective contribution can be achieved only if the test happens within the museum environment.

To me, the clearest outcome of the experiment was not a definite answer about which scanner is better to use, but rather a realisation: the technological process is as much about digital translation as it is about digital tools. Scanning is not just capturing; it is translating. It mimics how we see the world, but uses lenses and an algorithm instead of eyes and a brain. This is an intimate negotiation between machine and modeller, and between material object and digital model, which happens by circling the machine, adjusting distances, cleaning up meshes, and correcting distortions.

The labour involved in this negotiation is often hidden in the final products, for example in scans produced by larger institutions. In small museums, however, it becomes fully visible and unaffordable. Every flaw in the model tells a story, whether about the artefact itself or about the available resources, time, and skills. Digital heritage practices should therefore be seen not only as acts of preservation, but also as situated performances embedded within networks of people, tools, and meaning (Harrison et al., 2020).

Reflective Methodological Note

In this practice, I was not only a technician but also a translator between analogue and digital ontologies and a practitioner embedded in the economic and cultural realities of heritage work. My role was hybrid: part novice, part facilitator, part reflective scholar. By consciously occupying a position close to that of actual museum staff, I could simulate the constraints they would face and, in the process, develop methods grounded in practicality rather than perfection.

This experiment also raised a deeper epistemological question: what does it mean to “know” an object through digital technologies? When a scan fails to capture details, does that indicate a technical fault or a shift in representational logic? More importantly, does a model appear “real”, or does it just act as a vessel for historical imagination?

I think that, while visual fidelity is important, the ability of models to facilitate annotation, storytelling, and meaningful connections to cultural and historical contexts is even more significant (Pietroni, 2025). Thus, I argue that although reconstructions are partial, meaning-making can still be holistic, and I will carry this position into future research.

These reflections shifted my understanding of scanning from data extraction to emotional craft. They reaffirm a key principle of practice-led research: knowledge is not only what we extract from tools, but also what we learn through struggling with them.

References

Balado, J., Garozzo, R., Winiwarter, L. and Tilon, S., 2024. A systematic literature review of low-cost 3D mapping solutions. Information Fusion. [Online]. 14(102656) [no pagination]. [Accessed 28 April 2025]. Available from: https://doi.org/10.1016/j.inffus.2024.102656

Benítez Hernández, P. and López Consuegra, I., 2024. 3D Scanning of an Architectural Sculpture Using a Smartphone with LiDAR Sensor: The Case of the Late Gothic Helical Stair. In: Moral-Andrés, F., Moral-Andrés, E., and Reviriego, P. eds. Decoding Cultural Heritage: A Critical Dissection and Taxonomy of Human Creativity through Digital Tools. [Online]. Cham: Springer Nature Switzerland, pp. 293-318. [Accessed 28 April 2025]. Available from: https://doi.org/10.1007/978-3-031-57675-1_13

Cascarano, P., Meglioraldi, J., Vallasciani, G., Armandi, V., Augello, G., Carradori, S., Hajahmadi, S. and Marfia, G., 2025. A Comparative Analysis of 3D Modeling Methods for Integration into an Extended Reality Platform. In: 2025 IEEE International Conference on Artificial Intelligence and eXtended and Virtual Reality (AIxVR), 27-29 January 2025, Lisbon. [Online]. IEEE, pp.213-217. [Accessed 28 April 2025]. Available from: https://doi.org/10.1109/AIxVR63409.2025.00041

Harrison, R., DeSilvey, C., Holtorf, C., Macdonald, S., Bartolini, N., Breithoff, E., Fredheim, H., Lyons, A., May, S., Morgan, J. and Penrose, S. 2020. Heritage futures: comparative approaches to natural and cultural heritage practices. [Online]. London: UCL Press. [Accessed 21 April 2025]. Available from: https://library.oapen.org/bitstream/handle/20.500.12657/51792/1/9781787356009.pdf

Pietroni, E. 2025. [Pre-print]. Multisensory Museums, Hybrid Realities, Narration and Technological Innovation: A Discussion Around New Perspectives in Experience Design and Sense of Authenticity. [Online]. [Accessed 28 April 2025]. Available from: https://doi.org/10.20944/preprints202502.0440.v1

Smrčková, D., Chromčák, J., Ižvoltová, J. and Sásik, R., 2024. Usage of a Conventional Device with LiDAR Implementation for Mesh Model Creation. Buildings. [Online]. 14(5), pp.1279-1299. [Accessed 28 May 2025]. Available from: https://doi.org/10.3390/buildings14051279

Umezu, N., Koizumi, S., Nakagawa, K. and Nishida, S., 2023. Potential of Low-Cost Light Detection and Ranging (LiDAR) Sensors: Case Studies for Enhancing Visitor Experience at a Science Museum. Electronics. [Online]. 12(15), p.3351. [Accessed 28 April 2025]. Available from: https://doi.org/10.3390/electronics12153351

Vacca, G. 2023. 3D Survey with Apple LiDAR Sensor—Test and Assessment for Architectural and Cultural Heritage. Heritage. [Online]. 6(2), pp.1476–1501. [Accessed 28 April 2025]. Available from: https://doi.org/10.3390/heritage6020080