Date: 21/06/2024

Audio Tracks Showcase

This practice log collects data. If you agree to contribute, your data will be used for research purposes in Yuan Gao’s PhD project at the University of Leeds. An information sheet and consent form are available; please read them before contributing. You can withdraw at any time before submitting (that is, before clicking the “Vote” button). Because the data collection is anonymous, your data cannot be withdrawn from the data pool once you have clicked “Vote”. Please vote carefully. Thank you.

Sound 1.

Sound 2.

Sound 3.

Sound 4.

Sound 5.

Sound 6.

Sound 7.

Sound 8.

Sound 9.

Sound 10.

Sound 11.

Sound 12.

Sound 13.

Sound 14.

Sound 15.

Sound 16.

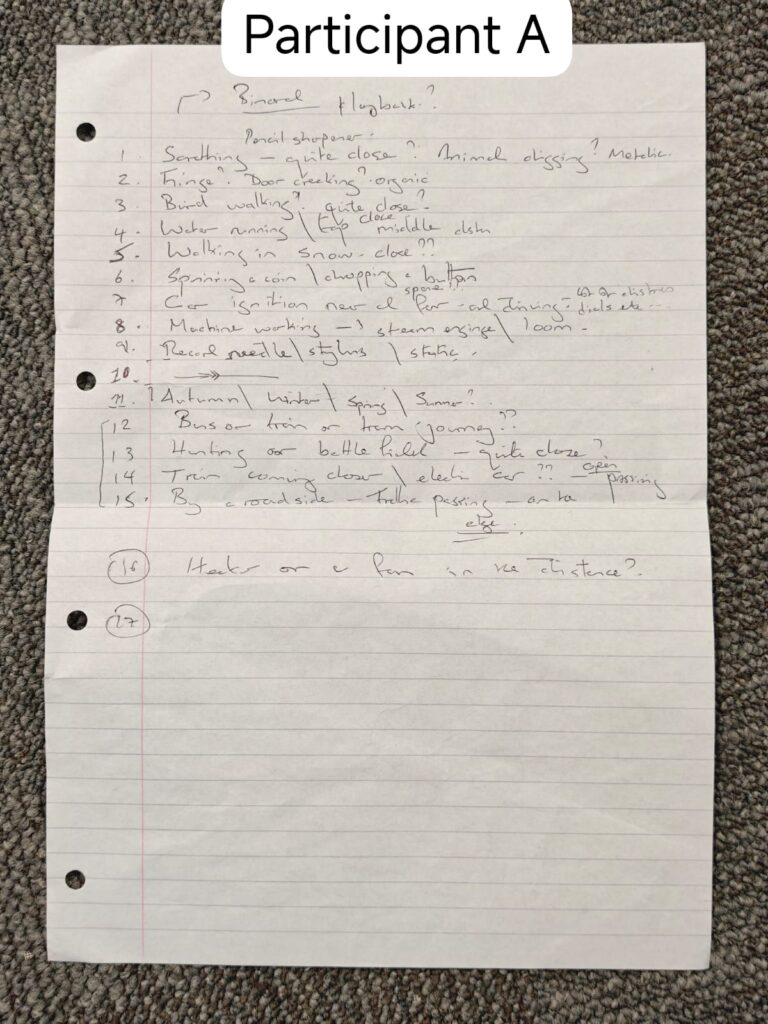

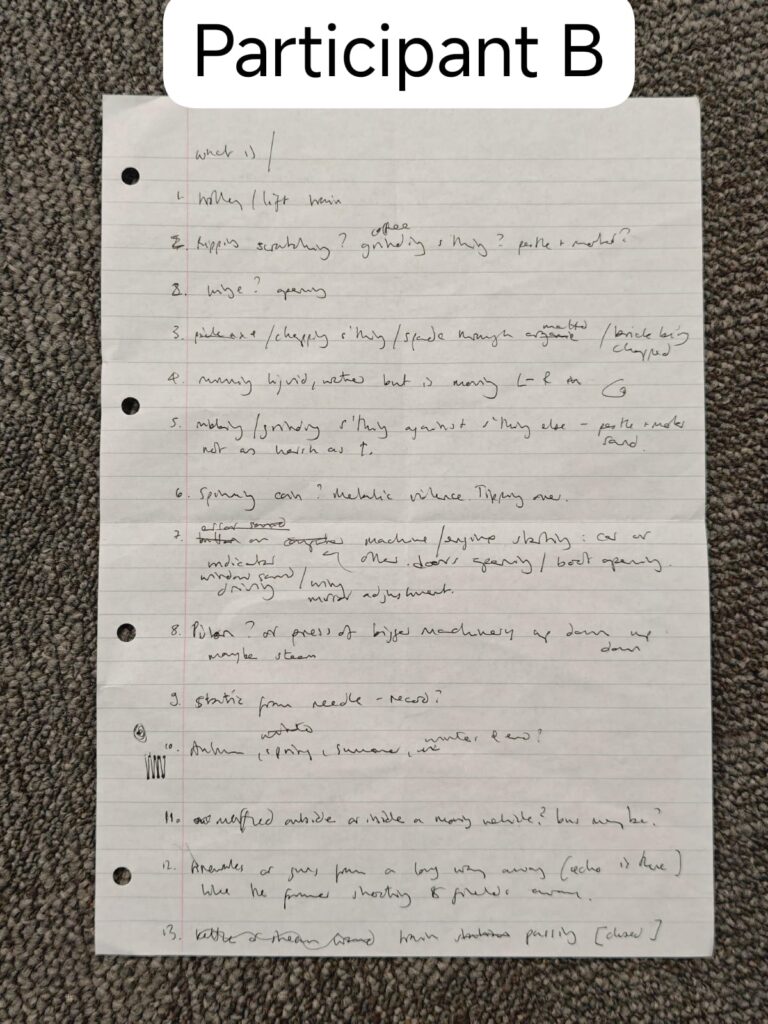

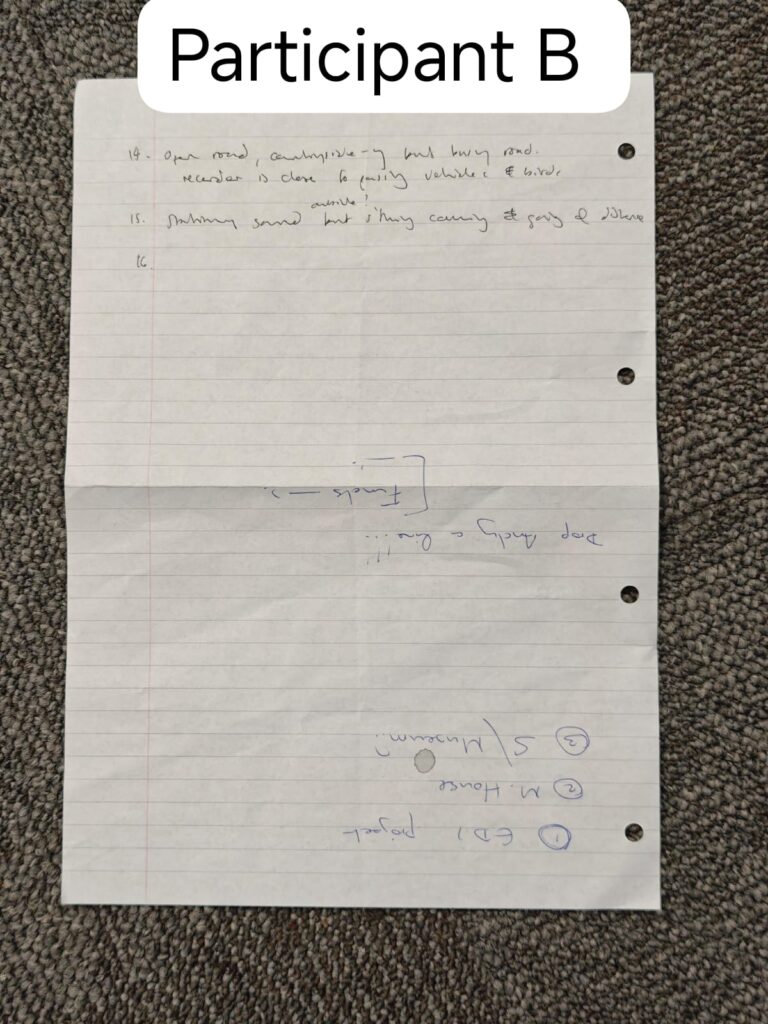

Test result (handwriting)

An example of data analysis for this practice is available here: https://docs.google.com/document/d/1h3jz33bCtaAKUw64nJBVg9vcnNgoyv8en8xwgssZE5g/edit?usp=sharing

Reflective Diary

This experiment aimed to explore how listeners interpret environmental audio in the absence of visual information, particularly how they map the soundscape semantically, spatially, and emotionally. The test consisted of 15 curated audio clips representing a range of spatial and cultural contexts: natural, industrial, domestic, and abstract. These clips were either recorded by me or sourced from freesound.org (the majority) and YouTube. The recordings were presented in both stereo and mono formats to examine how the two formats differ in conveying auditory information and supporting comprehension.

Given the exploratory nature of the test, I invited my two academic supervisors, both experts in digital heritage and immersive media/technology, to participate. The purpose was to observe how sound itself activates spatial, emotional, and cultural cognitive models, in order to form a hypothesis for subsequent research (for control groups in Practice Log 10).

The test was conducted in a quiet office environment, with participants using two types of headphones: Apple wired earbuds and Bose noise-cancelling earphones. This setup allowed me to assess perceptual responses under both standard and enhanced listening conditions.

Participants were asked to write down their understanding of each sound after listening to the clip, without being told in advance about its source or background. This approach requires participants to draw on their own life experiences rather than on contextual information. It was chosen to avoid framing effects (in this research, limiting participants’ imagination by providing extra information) and to simulate the auditory orientation conditions (in this research, the use of sound to guide a visitor’s attention and assist with navigation) commonly found in immersive museum applications (Verhulst et al., 2024; Cliffe et al., 2024).

The results (see the handwritten test results above) revealed considerable variation in the accuracy of sound source identification, particularly for industrial and domestic clips. Sounds with strong cultural markers, or that included multiple sound cues, were generally interpreted correctly, whereas single sound cues applicable to multiple contexts often led to hesitation or misclassification. Furthermore, the post-experiment conversation with the participants revealed that they arrived at a coherent interpretation by matching distinctive sounds in the environment with their memories from different senses. Does this indicate that the decoding process participants use when understanding a sound environment is based on: Observation – Marking Unusuals – Matching Memories – Interpretation? This may sound like a topic for neuroscience, but my focus is not on how the brain reacts; it is on how this potential decoding process can be used to present more comprehensible soundscapes in online museums.

Additionally, this study suggested that stereo recordings, and recordings containing a larger number of sound cues, are more effective than mono and single-source recordings in helping participants infer spatial layouts. This raised new questions: in a virtual 3D environment, can recorders that capture stereo or multi-channel sound still provide directly usable soundscapes, as they did in this test? If not, how should I understand the composition of a soundscape and construct it effectively? (This will be explored in Practice Log 10.)

Reflective Methodological Note

This test taught me how easily perception slips between recognition and imagination when only one sensory channel is active. My supervisors’ written responses revealed not just what they heard, but how they listened: what memories they drew on, what assumptions they made, what gaps they tried to fill.

In this project, I was again situated as both recorder and interpreter. No sound clip I chose was neutral; each carried my intent, my framing, my biases. Just as a photograph reflects the photographer’s gaze, a soundscape reflects the recordist’s positionality (Berger, 2008; Sturken & Cartwright, 2009). I learned that even the “natural” in sound is constructed.

I also reflected on the epistemic friction between audio and visual interpretation. Visual information tends to be over-trusted (Baumann, 2015; cf. the Chinese proverb [眼见为实,耳听为虚]: “Seeing is believing; hearing is misleading”), while audio remains under-theorised in spatial cognition. Yet, the test revealed how sound, especially when presented without visual cues, forces the listener into an active, imaginative state. They are not just consuming, but constructing.

From a methodological perspective, this trial became a rehearsal space for more complex immersive sound experiments. By selecting clips that varied in semantic density (some richly referential, others abstract or culturally ambiguous), I wanted to observe not only what participants could identify, but how they coped with uncertainty. Would they hesitate, invent, rationalise, or reject? In doing so, I wasn’t testing memory or recognition, but the cognitive elasticity of the listener when stripped of visual cues. As my methodology later describes, the goal was never generalisability but generativity: what forms of meaning-making emerge when sound is the only available map?

For me personally, as a practitioner with unilateral hearing loss, the experiment highlighted the embodied politics of perception. While my participants described binaural separation and reverberation effects, I often experienced a flattened, monaural space. This difference did not make the data invalid; on the contrary, it made it plural. As Sterne (2012) notes, listening is always situated: never universal, always context-bound.

Finally, the experiment suggested a future design challenge: if we expect museum visitors to use sound to navigate digital heritage, we must also teach them to listen differently. Taking Deep listening: A composer’s sound practice by Pauline Oliveros (2005) as an example, I believe listening is not passive reception; it is a learned skill, and museums need to consider auditory literacy as part of their digital curation strategies.

The Actual Source of the Recordings

Here is where the sounds you just listened to were actually recorded:

- Mining

- Fireworks

- Washing machine

- Pepper mill

- Mokka mill

- Spring forest

- Summer forest

- Autumn forest

- Winter forest

- Burning wood

- Busy crossroad

- Sewing machine

- Thunder

- Car engine

- Chewing food

- A busy road (on the left) next to a forest (on the right)

How many did you get right?

References

Baumann, M.P. 2015. The ear as organ of cognition: prolegomenon to the anthropology of listening. In: Baumann, M.P. ed. VIIth European Seminar in Ethnomusicology, 1-6 October, 1990, Berlin. [Online]. Wilhelmshaven: Wilhelmshaven, pp.123-141. [Accessed 10 April 2025]. Available from: https://fis.uni-bamberg.de/handle/uniba/37081

Berger, J. 2008. Ways of Seeing. London: Penguin UK.

Blesser, B. & Salter, L.R. 2007. Spaces Speak, Are You Listening? Experiencing Aural Architecture. Cambridge, MA: MIT Press.

Cliffe, L., Mansell, J., Greenhalgh, C., & Hazzard, A. 2024. Materialising contexts: virtual soundscapes for real-world exploration. Human-Computer Interaction. [Online]. [Accessed 29 April 2025]. Available from: https://doi.org/10.48550/arXiv.2412.08227

Oliveros, P. 2005. Deep listening: A composer’s sound practice. Lincoln: IUniverse.

Sterne, J. 2012. MP3: The Meaning of a Format. Durham: Duke University Press.

Sturken, M. & Cartwright, L. 2009. Practices of Looking: An Introduction to Visual Culture. 2nd ed. Oxford: Oxford University Press.

Verhulst, I., Hemming, R., Ganz, A., Bennett, J., Donnelly, R., Watling, D., & Dalton, P. 2024. [Pre-print]. Predictors of the Sense of Presence in an Immersive Audio Storytelling Experience: A Mixed Methods Study. Human-Computer Interaction. [Online]. [Accessed 29 April 2025]. Available from: https://doi.org/10.48550/arXiv.2406.05856

Original freesound.org and YouTube links to the sounds used in the sound test:

Sound 1: https://freesound.org/people/TravisNeedham/sounds/135121/

Sound 2: https://freesound.org/people/Suso_Ramallo/sounds/151633/

Sound 3: https://freesound.org/people/Ama_Dis/sounds/156397/

Sound 4: https://freesound.org/people/ancorapazzo/sounds/181631/

Sound 5: https://freesound.org/people/Jaturo/sounds/193492/

Sound 6: https://freesound.org/people/klankbeeld/sounds/273162/

Sound 7: https://freesound.org/people/klankbeeld/sounds/725797/

Sound 8: https://freesound.org/people/klankbeeld/sounds/544126/

Sound 9: https://freesound.org/people/klankbeeld/sounds/616354/

Sound 10: https://freesound.org/people/CRAFTCREST.com/sounds/277463/

Sound 11: https://freesound.org/people/gladkiy/sounds/458625/

Sound 12: https://freesound.org/people/kyles/sounds/407112/

Sound 13: https://freesound.org/people/jgrzinich/sounds/574696/

Sound 14: https://freesound.org/people/Dan_AudioFile/sounds/614698/

Sound 15: https://youtube.com/shorts/8IsG7zbcDik?si=ekf2njw2CbSjREe2