Date: 01/07-30/10/2024

Project Showcase

Virtual Exhibition Experiment Process and Guidance:

English Version:

Chinese Version:

Experimental Group – Sound Practice Content:

Apps used for phase 1 (please refer to the Sound Practice Toolkit section below)

- Metronome Beats:

Google download link: https://play.google.com/store/apps/details?id=com.andymstone.metronome&hl=en_GB&pli=1

Apple Store download link: https://apps.apple.com/us/app/metronome-beats-pro/id1486779670

- Frequency Generator:

Google download link: https://play.google.com/store/apps/details?id=com.luxdelux.frequencygenerator&hl=en_GB

Apple Store download link: https://apps.apple.com/us/app/frequency-sound-generator-app/id1238160553

Sound Practice Audio (The audio is partially binaural. Please make sure the settings on your equipment support binaural playback):

English Version:

Chinese Version:

Control Group – Sound Test Audio (The audio is partially binaural. Please make sure the settings on your equipment support binaural playback):

Questionnaire, Interview and Question Sheet for Sound Practice and Sound Test Data Collection:

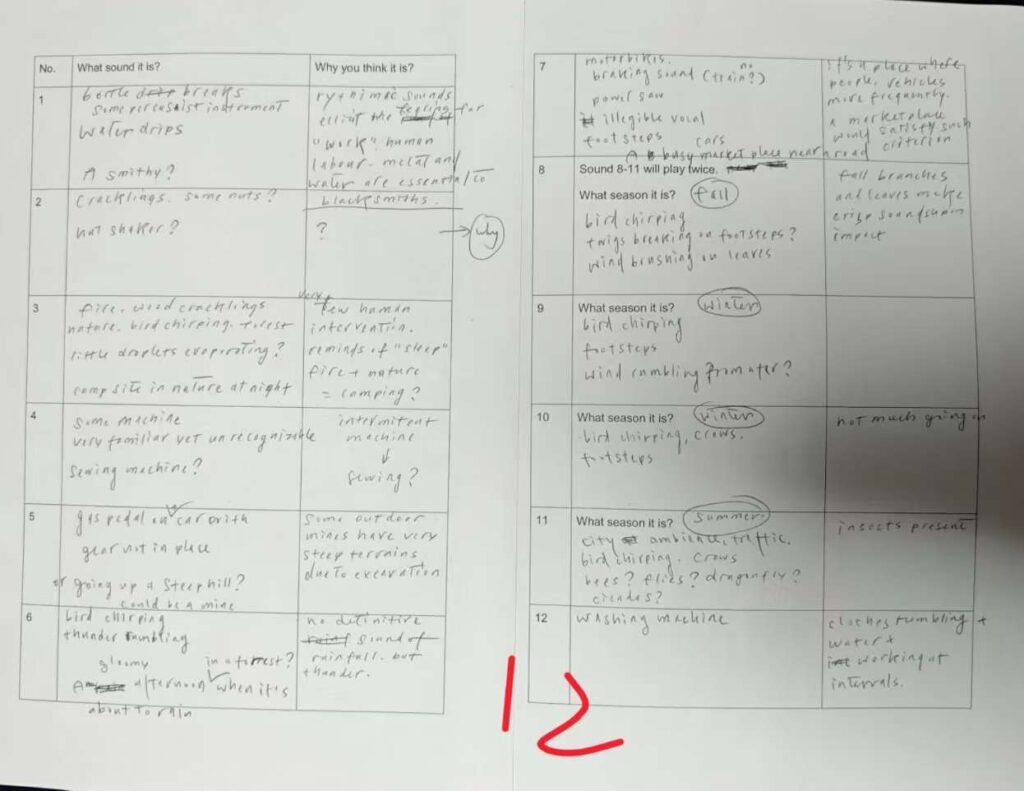

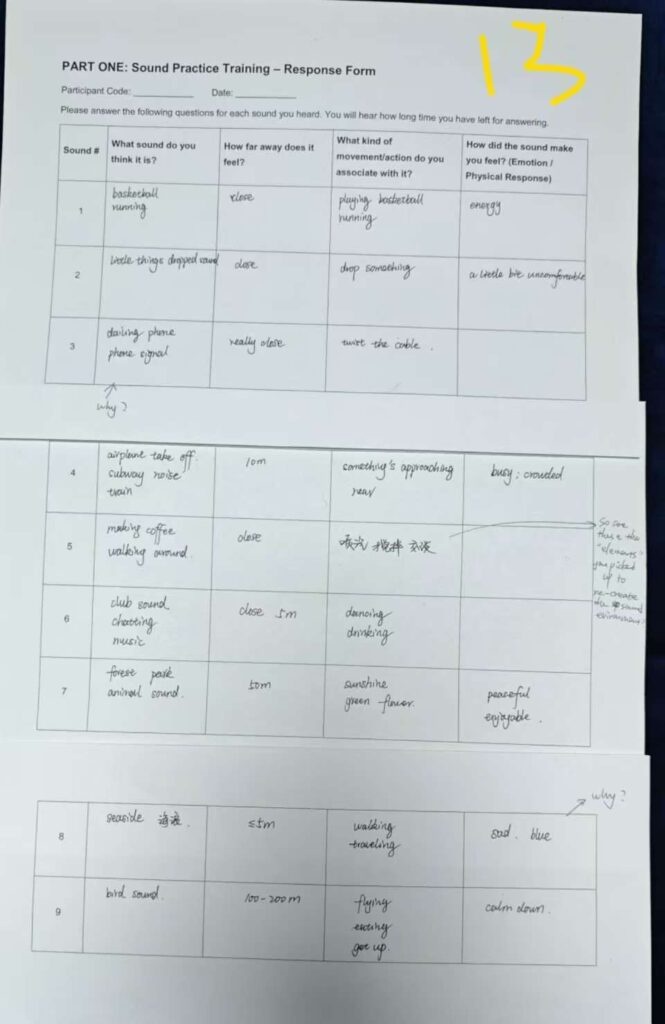

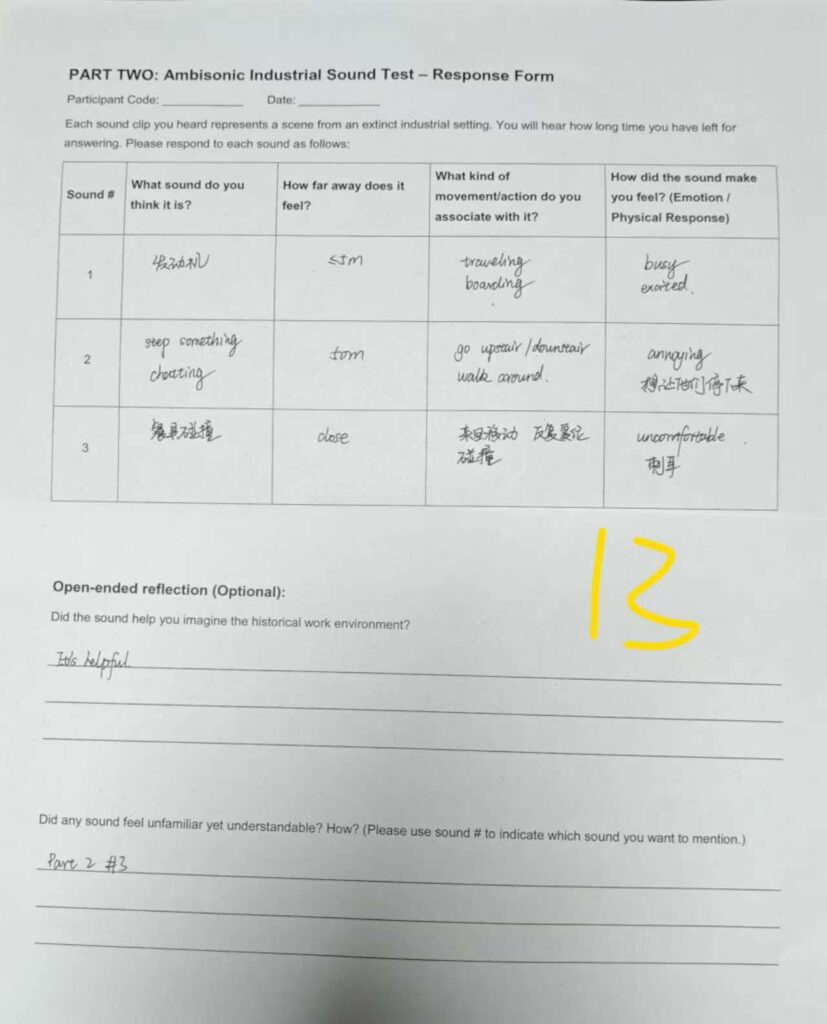

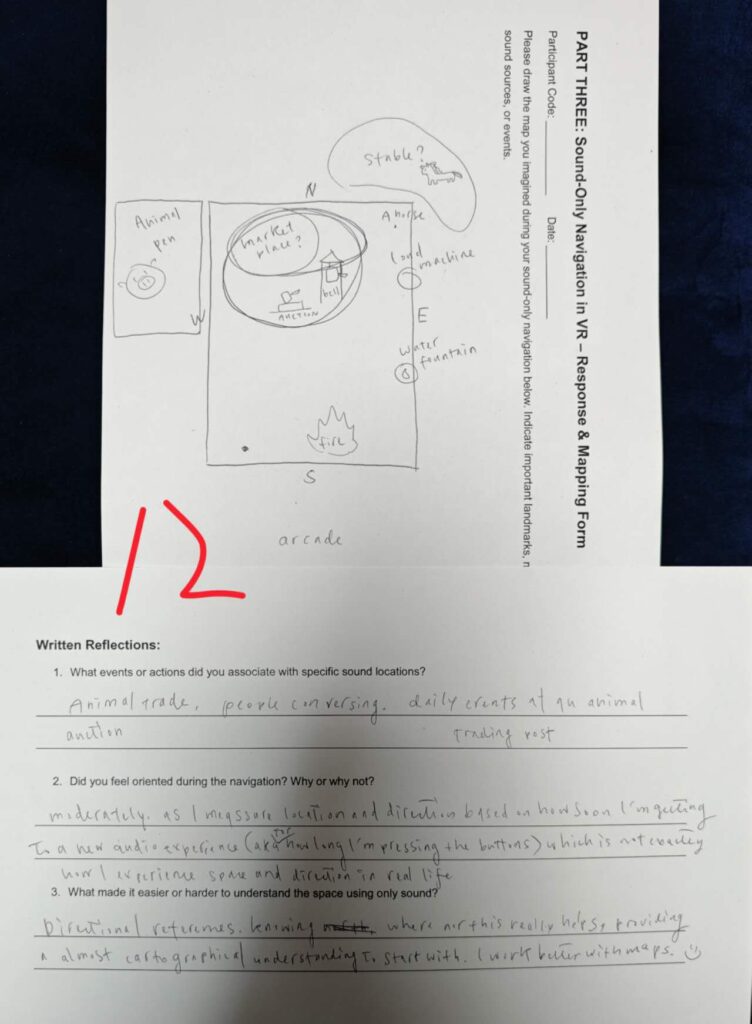

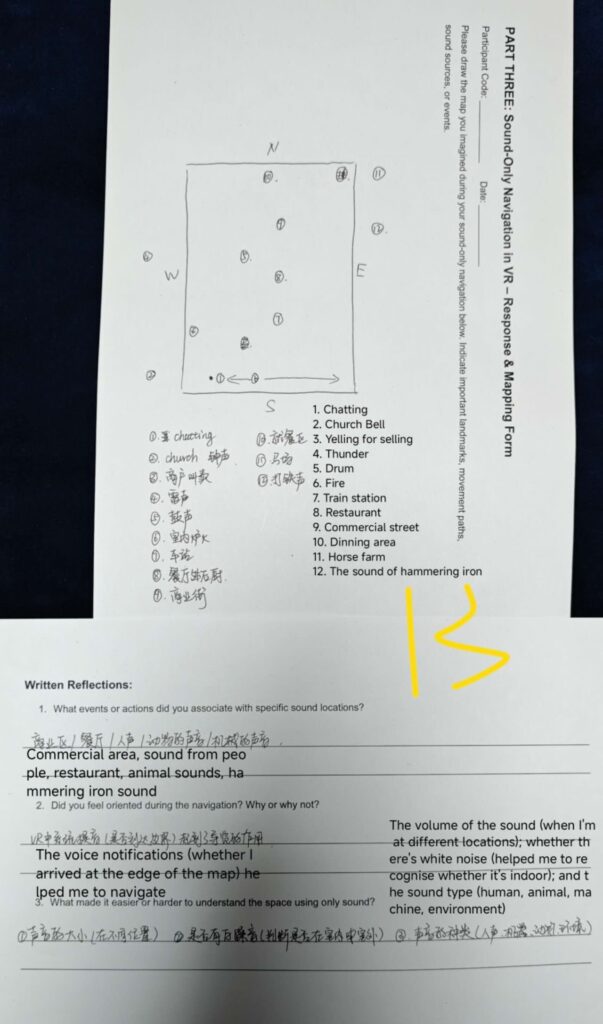

An example of part 1 and 2 answer sheets from different groups (open image in a new tab to see details)

An example of maps created by different groups

VR Tour:

Please download to a PC from here.

Download instructions

- You will need a VR headset that can be connected to the PC that you will use to view the project.

- Download the original file.

- Download the Epic Games Launcher. Launch the software and download Unreal Engine version 5.3.

- Open Unreal Engine, go to Browse, find the project file and click Open.

- Click the Play button on the toolbar (usually in the middle of the top of the screen, looks like a small triangle ▶️).

- Navigate the game with physical movement, and interact with the model with hand gestures (hand gestures are shown below)

- If you cannot access the tour, please feel free to contact Yuan to arrange an in-person experience via email ([email protected]) or via the Contact Me page. Only available before the 30th of October 2025.

If you cannot download or view the original file, please watch the video tutorial below, which covers downloading and viewing the project.

Because my research is iterative, I developed three versions of the VR tour based on feedback from the first three groups (details are in the Reflective Diary below). Each iteration reduced the visual elements and constraints in the project, and showed that I had underestimated participants: I did not need to worry about whether they could navigate the map with little visual information.

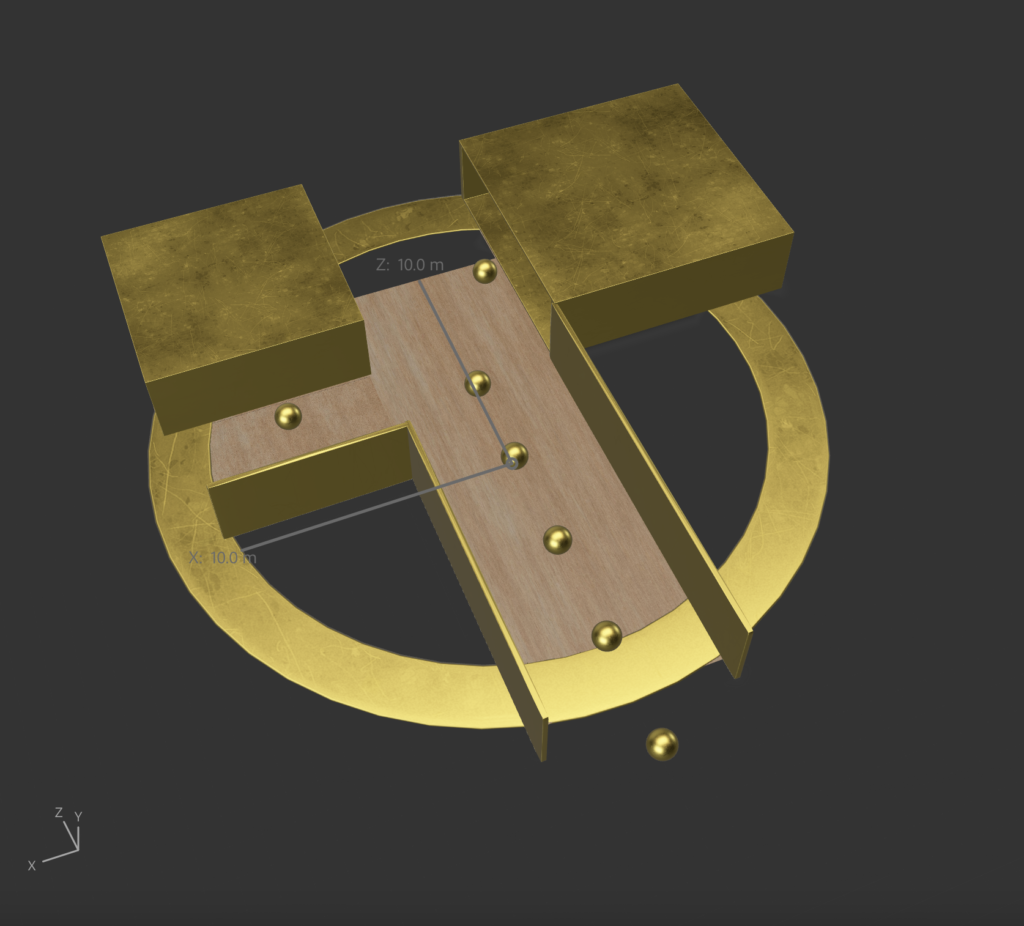

VR tour version 1: (initial version was delivered through Omnideck at the Helix Building)

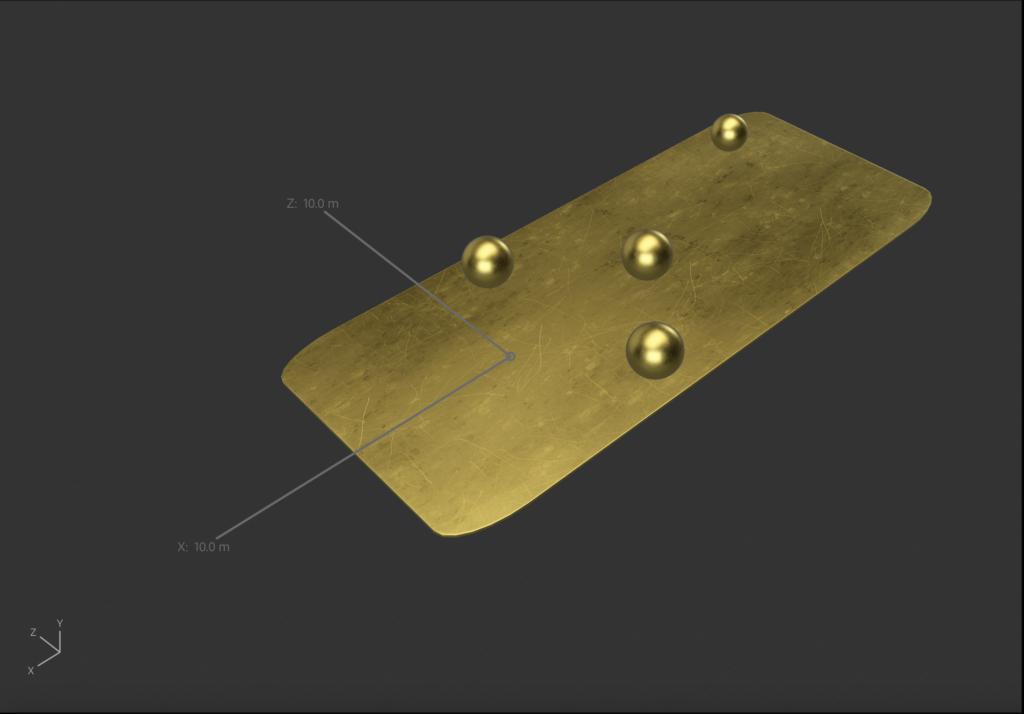

VR tour version 2: (from this version, the tour was delivered through PC)

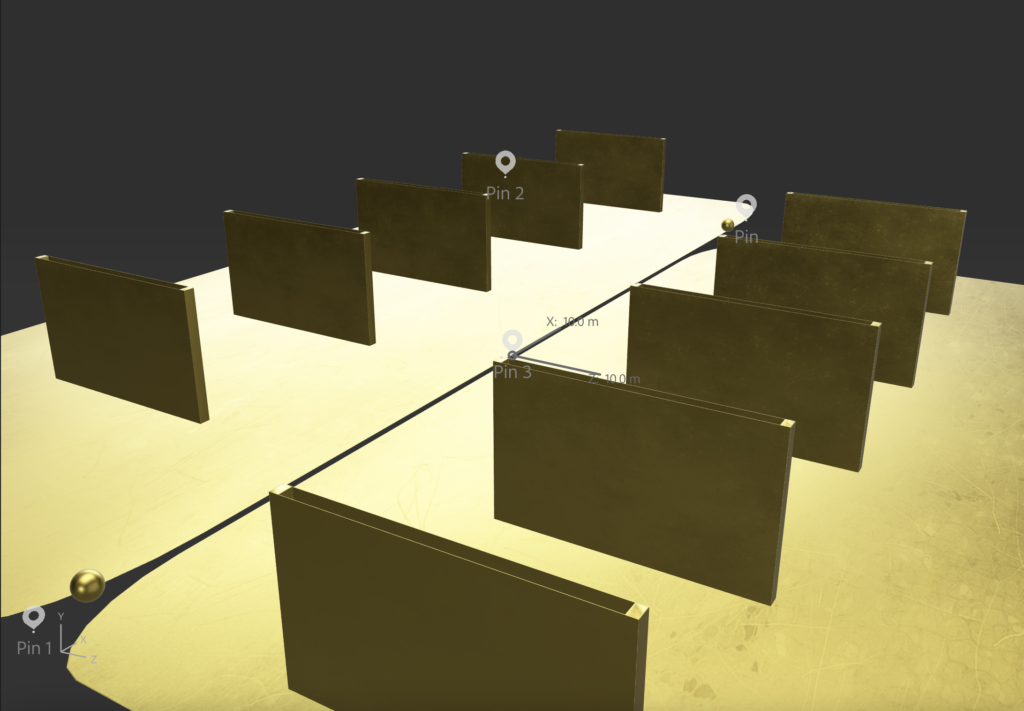

VR tour version 3: (the walls have been taken down in this version)

AR Exhibitions:

How to download and view:

- Download Adobe Aero to your smartphone. If you are using an iPhone, please search for the app in the App Store; if you are using an Android phone, please download here.

- You might need to log in to the app with an Adobe account.

- Download the file from the Google Drive link: https://drive.google.com/drive/folders/1wRgiU0CuzUzWRJkIrEHYIU8NVpssypKQ?usp=drive_link. Then open it via Adobe Aero. It might take some time to load.

- After opening, you will be asked by the app to find a big flat area in reality for placing the 3D model.

- Please do not change any settings; just click the Preview button (as shown in picture 1 below) to start viewing. If you change any settings by accident, please close the app directly without saving. Feel free to walk around the model and use your phone screen as a window that connects reality and virtuality.

- There are two buttons on the side of the model for you to experience different sound environments. To activate one, look at the button on your phone screen, physically move close to it, then tap it with your finger. The sound will stop when the loop finishes playing. You can tap the button again if you would like to listen again.

If you cannot download or view the original file, please watch the video tutorial below, which covers downloading and viewing the project.

AR tour 1

Floor map:

Video demonstration:

AR tour 2

Floor map:

Video demonstration:

AR tour 3

Floor map:

Video demonstration:

Data analysis (CGT) process and coding example:

https://docs.google.com/document/d/1QHt1GtuYRLxmRYFtrfwZI9lb4R-l1Mqks319m-8lahw/edit?usp=sharing

Reflective Diary

The previous nine practices created a complex picture of sound’s role in digital heritage. The potential for sound to enhance presence and emotional connection, and even to guide exploration (Sonic Wanderability), became increasingly clear (especially in Practices 6, 8 & 9). However, a significant challenge also emerged: the inherent ambiguity and subjectivity of sonic interpretation (the same sound can mean entirely different things in different contexts or to different people). As Practices 8 and 9 revealed, sound can support navigation in a smaller space and build an emotional connection that leads to a real-world behavioural reaction to the space, even though the visual content is obviously not real.

This highlighted a critical curatorial dilemma: how can museums leverage sound’s evocative power for lost heritage without overwhelming or misleading the audience? Will Sonic Wanderability and Affective Synthesis still function when there are multiple sound cues in a larger space and no visual information? How can the process of understanding the environment of lost heritage through sound alone be interpreted? Can AI-generated industrial sound provide an understanding of, and trigger emotions about, a lost industrial environment?

These questions motivated the design of this practice and led directly to running it with two cohorts: control groups and experimental groups. The primary focus of this practice is to explore the questions above and so contribute to RQ 2. The experimental groups test a hypothesis that emerged from Practice 7 and draws on Deep listening: A composer’s sound practice by Pauline Oliveros (2005): could a structured, preparatory sensory intervention, the Sound Practice toolkit developed from previous practice insights, provide the introductory cognitive scaffold needed to significantly enhance participants’ ability to navigate, interpret, and derive meaning from a sound-led virtual heritage environment with minimal or no visual cues? If so, how effective can it be, and how sustainable is the effect? I also discussed the question raised in Practice 5 (will online museums replace offline museums, and why?) with participants, who offered a visitor’s perspective for small and medium museums to refer to.

The experiment was designed to compare the experiences of two groups engaging with three AR experiences (industrial environmental sound generated by AI from descriptions collected from Calderdale Industrial Museum volunteers) and one original sound-based VR environmental experience (which represents an old outdoor market, built mainly from sound cues I recorded myself, with two from Dave Lynch, plus industrial and nature-related sounds from freesound.org). Together they delivered a navigable auditory map of a lost industrial environment with minimal or no visual cues. All participants were asked to view four online museums, picked from my initial empirical analysis (see details in my research thesis 2.2.1), before attending the experiment. These were the British Museum, Anne Frank House, Nottingham Industrial Museum, and Calderdale Industrial Museum (CIM has a virtual tour now, but this experiment was conducted before that). The Control Group (n=8) took part in the experience directly after completing a sound interpretation test that replicated the method from Practice 7 with similar content (the chewing sound and single sound cues were removed, and cultural and industrial-related sounds were added). The Experimental Group (n=8) first took part in the Sound Practice toolkit, a structured sensory and knowledge preparation session, before entering the same VR environment.

Initial tests with early participants revealed unexpected but highly productive frictions. The use of an Omnideck treadmill and the standard VR headset audio created a significant cognitive load. Participants reported spending more energy on physical balance and adapting to the technology than on listening. This technical unfamiliarity and the instability of the spatial mapping created a disorienting experience of walking in a void, which prevented any sense of immersion. However, from my point of view, this ‘failure’ was not purely negative. It was in these moments of frustration that participants began to ask the most critical questions: “Why am I being made to walk and listen like this?”, “Why isn’t the sound shifting naturally as I turn?”, “Sitting on a chair to experience the VR tour, like the sound interpretive test, can be a lot better” (from participants). This involuntary “exit from immersion” became a powerful illustration of immanence that forced participants to reflect on the medium itself and the logic of its construction. While this interfered with the experiment’s primary goal of testing the toolkit, it provided invaluable data for the thesis’s broader critique of immersion (Schrimshaw, 2015).

Consequently, suggestions from participants were used to iterate the VR experience to achieve a purer assessment of the sound practice’s effect. I modified the experience, simplifying the interaction to keyboard-based movement within a visually abstract, empty room. The results from this modified experiment were striking. As evidenced by their ability to later draw a map of the virtual space and its events, the experimental group, who attended the sound practice, navigated the soundscape with significantly greater speed (just over 20 minutes) and interpreted the space better. They were more adept at using sound cues for spatial orientation and identifying narrative events. Conversely, control group participants were more frequently disoriented and expressed frustration with the lack of visual information, often stating that they needed more visuals or more time to understand the audio (one participant from the control group took nearly 1.5 hours to create the map).

This marked difference strongly supports the hypothesis that the Sound Practice toolkit functioned effectively as a cognitive scaffold. An experimental group participant from Group 6 explicitly reported feeling more sensitive to sonic details (such as frequency ranges) after the practice. Crucially, this heightened sensitivity wasn’t merely analytical; it also fostered empathy, allowing her to connect the harsh industrial sounds experienced in the AR section to the potential lived reality of the workers (Practice 6). This suggests the toolkit initiated or enhanced what I term Affective Synthesis from the audience’s perspective: the cognitive and emotional process where listeners stitch together decoded sonic elements, personal memories (both direct and indirect), cultural associations, and emotional responses into a coherent and meaningful experience instead of a factual replication. The Sound Practice seemingly equipped participants not just with better tools for hearing, but also with introductory knowledge that helped them understand industrial sounds, listen deeply, and synthesise meaning more effectively and efficiently.

Follow-up questionnaires (questions are rated 1-5, where 1 is strongly disagree, 3 is neutral, and 5 is strongly agree) from the Experimental Group who attended the Sound Practice provided further nuance regarding the effectiveness and sustainability of the toolkit’s impact. Seven days after the session, participants generally reported strong positive effects, aligning with the initial observations. Many agreed or strongly agreed (scoring 4 or 5 out of 5) that their perception of background and spatial sounds (distance/movement) had become more acute, that they felt more confident identifying unfamiliar sounds, and that they found themselves actively using sound to imagine or understand spatial environments. The written feedback echoed this, with one participant stating that the Sound Practice “increased [my] sensitivity to mechanical noise” in daily life, leading them to use earplugs, while another mentioned paying “more attention to sounds from different directions [in Chinese: 经过关于方位的声音测试后有更有意留意不同方位的声音对自身地理认知的细致影响]” and realising sound’s role in spatial awareness, something they “hadn’t paid much attention to before [in Chinese: 以前没有太留意声音在定位上的感知作用]”. Some also reported clearly remembering specific sounds from the experiment (scores of 4 or 5).

However, translating this heightened awareness into consistent behaviour in new situations proved less pronounced, with scores for consciously using sound in unfamiliar environments averaging lower (around 3). Furthermore, the 21-day follow-up indicated a noticeable attenuation of these effects. While recall of the practice itself remained relatively strong (most scoring 4), and awareness of sound’s spatial characteristics often persisted (scores varied but frequently 4 or 5), the self-reported change in general listening habits dropped significantly (most scoring only 2), as did the perceived lasting impact on overall sensory processing (scores ranging from 1 to 3). As one participant reflected, while guidance makes one conscious of sound (in Chinese: “只要做了一定的引导,人们就会有意识和视角去关注”), maintaining that focus without external prompting is difficult (in Chinese: “…很难说是否声音练习对我有长久的影响”). Thus, while the Sound Practice toolkit provides a significant initial boost in auditory sensitivity, spatial awareness, and potentially empathy, facilitating a richer Affective Synthesis in the immediate aftermath, its capacity to induce sustained, long-term changes in everyday listening habits appears limited for most participants without ongoing reinforcement. Nonetheless, the feedback shows that Sound Practice raised awareness of sound’s spatial and evocative potential, sparking continued interest in concepts like gamified online museum experiences among some participants even weeks later.

Aside from this, the VR navigation task itself provided rich data on Sonic Wanderability. The VR tour shows the capacity of sound to support self-directed exploration and meaning-making within a sound-dominant environment. When participants were freed from overwhelming or misleading visual cues (as in the Omnideck scenario mentioned by Group 1), most of them demonstrated strikingly active exploratory strategies. Some adopted systematic methods, following a “Roomba” scanning pattern (Groups 5, 6) and relying on my voice notifications (at the edge of the map, they could hear my voice saying “you have reached the [compass direction] of the map”) to cover every corner of the map and ensure no detail was missed. Others employed a more curiosity-driven approach, navigating towards intriguing sounds like the bell (Group 6) and using prominent sonic events (like the market chatter) as landmarks or central reference points (Groups 3, 6, 2). Surprisingly, one participant (Group 5) described navigating partially through tactile memory (“how long I pressed the key”), indicating that Sonic Wanderability can be an embodied, multi-sensory process even without dominant visuals.

However, the challenge of visual dominance still exists, even though I tried to keep the visuals to a minimum. The abstract boundary walls in the VR environment demonstrably constrained auditory perception for some. One participant (Group 2) reflected that the walls prevented her “thoughts [from going] beyond the wall,” leading her to incorrectly assume all sounds originated within the mapped area. I then removed the walls and used the initial settings of Unreal Engine; however, Group 6 participants debated whether the blue sky implied an outdoor setting, showing how even minimal visuals trigger powerful, potentially misleading, spatial assumptions. This aligned with the feedback from Group 1 participants. Furthermore, the eldest participant (Group 8), who strongly self-identified as visually oriented, struggled significantly with navigation, highlighting that Sonic Wanderability is not universally intuitive and may depend on prior experience (e.g., gaming) or cognitive preferences.

The exploration of Affective Synthesis yielded perhaps the most compelling insights, particularly concerning authenticity. The AI-generated industrial sounds in the AR component were overwhelmingly accepted as “real” or “authentic” by participants across multiple groups (Groups 2, 3, 4, 6, 7, 8). This acceptance wasn’t based on technical fidelity alone. Instead, it stemmed from the sound’s ability to trigger affective resonance, which evoked plausible, and sometimes stereotypical, imagery of industrial settings (Groups 6, 7).

The sound connected powerfully with participants’ personal histories, triggering vivid, often cross-modal, memories: for example, the smell of manure linked to horse sounds (Group 2), the visual recall of mill shuttles prompted by AI factory noise (Group 8), or even childhood street sounds (Group 3).

One participant from Group 5 (a control group) mentioned that the lack of clarity in the AI sounds actually enhanced their perceived authenticity, feeling closer to the complex noise of real life than the clearer, elementally distinct sounds from the initial sound test. This aligns with the idea of plausible fiction (the outcome of Affective Synthesis, discussed previously in Practice 8): the experience felt “true” because it resonated emotionally and experientially, regardless of its objective origin. Group 7’s participant articulated this perfectly: “It obviously doesn’t have to be authentic because I thought it was real.” This suggests that for Affective Synthesis to occur, the primary requirement is emotional or experiential authenticity, not necessarily factual or technical authenticity.

However, the perceived authenticity which underpins Affective Synthesis proved to be a complex and negotiated process, heavily dependent on the listener’s background and prior knowledge, which echoes insights from Practice Log 9. The reaction to an AI-generated Yorkshire accent (initially discussed concerning Group 2) served as a great example: for those unfamiliar with the region (‘outsiders’), the sound might confirm or enhance a pre-existing stereotype based on their knowledge of the context, thus feeling functionally authentic in establishing a sense of place. However, for the participant with deep lived experience (‘insider’), the same sound felt “weird”, as it failed to capture the known diversity and nuance and simplified reality (“Yorkshire has many different accents”), and so the illusion broke. This highlights that negotiated authenticity is fragile and highly dependent on the listener’s background. Additionally, participants from China in Group 6 readily identified “stereotype [in Chinese: 刻板印象]” or “symbolic meaning [in Chinese: 象征意义]” as interpretative shortcuts they used. In this group, Speaker B likened it to associating high heels with women, while Speaker A linked the voices heard in the AR industrial setting to her “stereotype of white working-class men”. They reflected on “my own bias or stereotype [in Chinese: 我自带的偏见或者刻板印象]” that was brought into the listening and interpreting process (Group 6, Speaker B). Similarly, Group 3 participants confidently placed the VR market scene in historical periods like “medieval” or “Republican China” based on stereotypical sound cues like “horse carriage”, “bell sounds”, and “early train sounds”, with one explicitly citing the game Assassin’s Creed as their reference. Even indirect experiences from games and films were cited as valid resources for decoding unfamiliar sounds like mining (Group 1), further demonstrating how pre-existing mental frameworks, stereotypical or not, are crucial for making sense of sonic information. This shows the importance of pre-existing knowledge for achieving a better understanding, which is also the design aim of the Sound Practice.

Intriguingly, and challenging simple notions of fidelity, one participant (Group 5) found that the less clear, more complex AI industrial sounds (in AR) felt more authentic than the cleaner test sounds, suggesting that sometimes a degree of ambiguity aligns better with expectations of real-world sonic complexity. More importantly, Group 6 also pointed out the dimension of trust and the perceived power relation with the institution or researcher. Participants often tend to accept the presented sounds as authentic or legitimate, potentially lowering their critical threshold for questioning authenticity initially. This complex interplay between the sound itself, the listener’s mental framework (shaped by direct experience, indirect media exposure, and stereotypes), and their trust in the source ultimately determines whether authenticity is successfully negotiated and Affective Synthesis can fully occur.

Beyond these core theoretical discussions, the interviews illuminated several crucial perspectives relevant to the broader questions raised in the previous practices that motivated this practice, particularly the relationship between online and offline museum experiences, which is a key concern for small and medium museums (emerging from Practice 5):

The majority of participants explicitly stated that they would not want online museums to replace offline ones, or that they do not think online museums will replace them. A powerful articulation of this came from a Group 6 participant in follow-up feedback: she stressed that physical museums are not just “containers for exhibits [in Chinese: 展品的容器]” but vital “cultural spaces [in Chinese: 文化空间]” and integral parts of the “community”. For locals, places like the British Museum or Leeds Art Gallery become part of “daily life”, revisited repeatedly not just for changing exhibits but for the “social, experience, and embodied” connection they offer. This links to a sense of belonging and a space for shared activity, especially for families (“definitely need to take children in person [in Chinese: 肯定是要带孩子们亲历啊]”). She argued passionately that online museums cannot replicate this “placemaking” function. If physical museums fail in their social engagement and cease to be attractive community hubs, then they risk being perceived as replaceable by online alternatives [original data, partially in Chinese: “如果博物馆在social engagement和events上做得不行,让观众觉得和自己没关系或者很无聊,那才会被线上取代,因为他们对local community变成了没有吸引力的space,甚至一次性可替代”]. In other words, replacement would result from the failure of the physical museum, not from the strength of the digital one. This resonates with Group 8’s emphasis on the unique value of seeing the physical artefact (the bookcase) within its context, supported by narrative.

Moreover, the Group 6 participant also highlighted the restriction of “autonomy” inherent in many online tours, contrasting the pre-determined routes and fixed perspectives with the freedom of physical presence. She struggled to find the right word but pointed towards “embodiment [in Chinese: 那种体验……我与空间融合的]”. It is a feeling of merging with and actively choosing one’s path within the cultural atmosphere, even independent of specific exhibits, which partially reflects the Sonic Wanderability in the VR that allows participants to freely explore the space. This lack of embodied agency online was a key differentiator. While online experience offers accessibility (especially for the housebound, Group 7), focused study without distraction (Group 1, Speaker A), and sometimes easier navigation within complex layouts (Group 4), it fundamentally lacks the embodied freedom and social dimension of the physical visit.

Given that online experiences cannot fully replicate the embodied and social aspects of the physical museum, participants leaned towards valuing what online experiences can do uniquely. This directly links to the preferences for interactivity and storytelling that participants showed in this experiment. Participants frequently invoked game mechanics (Groups 1, 2, 3, 5, 8), valuing the freedom to explore (Sonic Wanderability appearing as desired user agency), solve puzzles (G5), or follow engaging narratives (G5, G6, G8). For small and medium museums, this implies a crucial strategy: rather than attempting costly, often unsatisfactory, high-fidelity replicas of their physical space, they might find greater success by leveraging digital platforms to create unique, engaging, interactive, and narrative-rich experiences that play to the strengths of the medium and offer something genuinely different from a physical visit. Combined with the feedback from Group 1 (about the failure of the Omnideck), this finding highlights that what makes a good online museum is not how advanced the technology is, but how engaging the content is.

Delving deeper into how participants made sense of the soundscapes, the process consistently mirrored the puzzle-solving model hypothesised in Practice 7 (Observation – Highlighting Unusuals – Matching Memories – Interpretation). Participants from control groups did not passively absorb the audio; instead, they actively analysed it.

After they observed individual sound elements, they appeared to filter this input, focusing on distinct, salient, or sometimes contradictory elements. For instance, in Group 1, the co-presence of “metal working” (打铁) and “dripping water” (水声) was marked as “strange” (奇怪的地方), prompting further investigation. Similarly, participants noted specific animal calls (Group 1’s crow/geese, Group 3’s sheep/dogs), distinct human actions like “breaking a box” (Group 8, Speaker A), or the unique quality of a sound, like the AI industrial noise being perceived as less clear but more authentic (Group 5). Furthermore, they started matching the sound with memories in a very short time (I gave them only one minute to write their interpretation of each sound, but none of the participants asked for extra time, and all finished writing before time ended), and a list of answers showed how they interpreted sounds during listening (see an example of one answer sheet below). This list of answers under one sound shows that the Observation – Highlighting Unusuals – Matching Memories – Interpretation process is repeated and self-correcting. An interpretation is settled only once it can no longer be corrected.

In conclusion, this practice validated the Sound Practice toolkit as an effective cognitive scaffold, significantly boosting participants’ immediate ability and understanding to navigate soundscapes (Sonic Wanderability) and synthesise meaning (Affective Synthesis). Follow-up revealed strong short-term gains (7 days) in auditory sensitivity and spatial awareness. However, significant long-term changes in daily listening habits were less evident (21 days), which suggests the Sound Practice primarily boosts immediate capability and awareness rather than inducing lasting behavioural shifts, though heightened awareness of sound’s spatial qualities often continues.

The core mechanism observed was Affective Synthesis, where listeners stitch sound, memory (direct/mediated), and cultural context into subjectively meaningful experiences. This process prioritises emotional/experiential authenticity over factual origin, explaining the broad acceptance of AI-generated sounds when they achieve affective resonance. But authenticity remains a fragile, negotiated concept dependent on listener background and trust. Sonic Wanderability, while challenged by visual dominance and individual differences, proved viable through diverse exploratory strategies.

Participants confirmed the distinct, complementary roles of online and offline museums: they rejected replacement and valued unique, interactive, narrative-rich online experiences over mere replication. Ultimately, the research underscores sound’s foundational power to structure navigation and foster a deep, affective engagement with digital heritage, transforming virtual visits into personally resonant encounters.

The Sound Practice Toolkit

The following toolkit was designed and implemented as the experimental intervention in Practice 10. It is a structured, preparatory session, lasting approximately 40 minutes, intended to be facilitated by a curator or researcher before a visitor engages with a sound-led exhibition. This process can also be delivered via a pre-recorded audio guide to replace a live facilitator. Its purpose is to attune the participant’s senses, activate personal memory, and provide a cognitive framework for interpreting unfamiliar soundscapes. In other words, it aims to bridge the gap between everyday listening and the focused, deep listening required for a sound-based cultural heritage experience.

The toolkit’s design was inspired by Pauline Oliveros’ (2005) influential concept of Deep Listening, which emphasises the cultivation of expanded auditory awareness through sound concentration and meditation. Oliveros’ practice is typically centred on prolonged concentration and is often aimed at musicians, performers, or spiritual practitioners (see Appendix at the end of this page for Oliveros’ sound practice examples). However, I have not identified any listening exercises in the scholarly literature that centre on online museum visits and activate visitors’ auditory senses. Therefore, in this study, the original framework was adapted to better suit short-term cultural encounters in digital or museum environments.

Materials and Setup:

The materials required for this practice were intentionally kept simple to enhance its controllability, replicability, and potential for iteration. The setup includes a quiet room free from odours, comfortable seating, headphones for each participant, and a pre-curated list of sound excerpts. The materials and guide prompts mentioned below are detailed in the appendices of this thesis.

Phase 1: Sensory Attunement (Average duration: 17 minutes)

Step 1: Grounding and Spatial Awareness (Average duration: 2 minutes)

- Materials: A metronome, a pitch generator, and the sound of a handclap.

- Facilitation: The facilitator uses these sound sources to guide participants, with their eyes closed, in identifying the location and distance of sounds within the room, establishing a baseline for spatial audio awareness.

- Rationale: To shift the participant’s primary sensory modality from visual to auditory and to establish a conscious connection between sound and spatial perception.

Step 2: Connecting Internal and External Perception (Duration: 15 minutes)

- Materials: A guided meditation audio track, revised from the Into the Senses meditation by James Taylor of the University of Leeds Wellbeing Service (https://mymedia.leeds.ac.uk/Mediasite/Play/3a3b6061e16b46c98ac30b0c4afd31541d).

- Activity: Participants are guided through a meditation that focuses on grounding and relaxation by activating sight, touch, sound, and internal bodily awareness.

- Rationale: To ground and relax participants, preparing them for a state of focused and receptive listening by fostering a connection between their external environment and internal sensory state.

Phase 2: Focused Listening (Average duration: 20 minutes)

Instruction: In this phase, after listening to each sound, participants are asked to briefly write down: (1) What do you think this is? (2) What specific sonic cues (e.g., echo, rhythm, pitch) led you to that conclusion?

Step 3: Evoking Personal Memory (Average duration: 10 minutes)

- Materials: A curated selection of sound excerpts that participants might have encountered in childhood, youth, and middle age, as well as sounds from natural, public, and domestic environments.

- Activity: Participants listen to the sound clips and document their interpretations and any memories or feelings evoked.

- Rationale: To activate the participant’s personal, affective sound library and to explicitly link auditory memory with a multisensory response, demonstrating that sound is never experienced in isolation.

Step 4: Pre-listening to Key Sonic Elements (Average duration: 10 minutes)

- Materials: 2-3 key individual sound elements that will feature prominently in the main VR experience (e.g., the specific sound of a particular machine, a recurring ambient tone).

- Activity: The facilitator plays the key sounds and explicitly tells participants what they are (e.g., “This is the sound of a moquette loom you will hear later”).

- Rationale: To prime the participants by pre-loading their short-term auditory memory with key sonic anchors. This reduces the cognitive load of interpreting completely unfamiliar sounds during the main experience, allowing them to focus on narrative and spatial understanding.

Phase 3: Final Briefing (Average duration: 3 minutes)

Step 5: Setting the Intention

- Instruction: “You are about to enter a virtual space where sound is your primary guide. The visuals will be minimal. Your task is not to ‘see’ the past, but to ‘listen’ to it. Use the sounds you hear to navigate, to imagine the environment, and to piece together the story. There are no right or wrong answers, only your own experience”.

- Rationale: To manage expectations and provide a clear cognitive frame for the upcoming experience, thereby empowering the participant as an active co-creator of meaning.
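Because the practice is intended to be replicable, its timing can also be written down as data. The following is a minimal sketch and not part of the original study materials: the names `Step`, `SESSION`, `phase_totals`, and `timetable` are hypothetical, the durations are the averages stated above, and assigning the full 3 minutes of Phase 3 to Step 5 is an assumption, since no separate duration is given for that step.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    phase: int
    name: str
    minutes: int  # average duration as stated in the protocol


# Durations come from the protocol text. Step 5 has no duration of its own,
# so the 3 minutes stated for Phase 3 are assigned to it (assumption).
SESSION = [
    Step(1, "Grounding and Spatial Awareness", 2),
    Step(1, "Connecting Internal and External Perception", 15),
    Step(2, "Evoking Personal Memory", 10),
    Step(2, "Pre-listening to Key Sonic Elements", 10),
    Step(3, "Setting the Intention", 3),
]


def phase_totals(steps):
    """Sum the step durations within each phase."""
    totals = {}
    for step in steps:
        totals[step.phase] = totals.get(step.phase, 0) + step.minutes
    return totals


def timetable(steps):
    """Return (start_minute, end_minute, name) for each step, in order."""
    rows, clock = [], 0
    for step in steps:
        rows.append((clock, clock + step.minutes, step.name))
        clock += step.minutes
    return rows


if __name__ == "__main__":
    for start, end, name in timetable(SESSION):
        print(f"{start:>2}-{end:>2} min  {name}")
    print(phase_totals(SESSION))
```

Run as a script, this prints a cumulative timetable ending at 40 minutes, which is the sum of the stated phase averages (17 + 20 + 3). A facilitator adapting the practice could change the `minutes` values and check that the phase totals still match the intended session length.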

Reflective Methodological Note

Reviewing the composition of the participant groups, one aspect worth reflecting on is the low proportion of elderly participants in this research. Although an eighty-year-old couple (Group 8) took part, the majority of participants were aged 22-30. This reflects the fact that younger participants were easier to recruit online than elderly ones.

Meanwhile, the VR/AR technology interfaces I employed may present varying levels of accessibility for users of different ages and technical proficiency. The challenges that Group 8 encountered during VR navigation suggest that the technology itself may impose additional adaptation demands on certain groups, particularly older individuals with limited digital experience.

Therefore, the theoretical perspectives developed in this research, such as Sonic Wanderability and Affective Synthesis, primarily reflect the interaction patterns and cognitive frameworks of younger cohorts. Older individuals, owing to their distinct life experiences, memory structures, and potential sensory differences, may exhibit unique patterns in sound perception and virtual space interaction. This means the applicability of current theories in interpreting user experiences across different age groups needs further examination.

Bibliography

Oliveros, P. 2005. Deep listening: A composer’s sound practice. Lincoln: iUniverse.

Schrimshaw, W. 2015. Exit Immersion. Sound Studies. [Online]. 1(1), pp.155–170. [Accessed 30 January 2025]. Available from: https://doi.org/10.1080/20551940.2015.1079982

Appendix

Here are some examples from Oliveros’ sound practices:

Breath Improvisation (Oliveros, 2005, pp.10-11)

Standing in a circle, bring your attention to your breath. With short or longer puffs of air use the sound of breaths to improvise a playful piece interacting with others for three to five minutes. Try not to vocalize the breaths. Notice any differences that you feel after the improvisation. Also reflect on the rhythms, texture and shape of the improvisation as if it were a composition.

Breath Regulation

Inhale through the nose for the count of 6. Hold for the count of 4. Keep the throat relaxed. Exhale through a small aperture of the lips with a subvocalization of the syllable ‘hahhhh’ for the count of 8. Relax and wait for the count of 4 and repeat the cycle. Subvocalization of ‘hahhhh’ restricts the epiglottis and helps direct energy to the lower abdomen.

Listening (Oliveros, 2005, p.12)

Sit either on the floor or in a chair.

If on the floor, use a cushion to raise the sitz bones.

If sitting in a chair, feet are flat on the floor.

The legs should be crossed either in full lotus position, or tucked in close to the body with the knees relaxed downward to the floor.

Posture is relaxed upper body, chin tucked in slightly, balanced on the ‘sitz’ bones and knees.

Palms rest on the thighs, or palms folded close to the belly.

Eyes are relaxed with the lids half or fully closed.

At the sound of a bell or gong listen inclusively for the interplay of sounds in the whole space/time continuum. Include the sounds of your own thoughts. Can you imagine that you are the center of the whole?

Use this mantra to aid your listening:

With each breath I return to the whole of the space/time continuum.

If a sound takes your attention to a focus, then follow the sound all the way to the end as you return to the whole of the space/time continuum.

At the sound of the bell prepare to review your experience and describe it in your journal.

Listening Journal (Oliveros, 2005, p.17)

After the listening exercise, describe your experience in writing in a notebook that you keep for this purpose. Give your experience a title. Notice your feelings about your experience and record them. Of course, writing can be replaced by another means of recording to suit your needs (e.g. Tape).

Please be aware that, in the original, each exercise is accompanied by a commentary section. If you would like to know more about these exercises, I strongly recommend reading Oliveros’ original work.